
From whirling ceiling fans to ticking clocks, the sounds that we hear subtly vary as we move through a scene. We ask

whether these ambient sounds convey information about 3D scene structure and, if so, whether they provide a useful

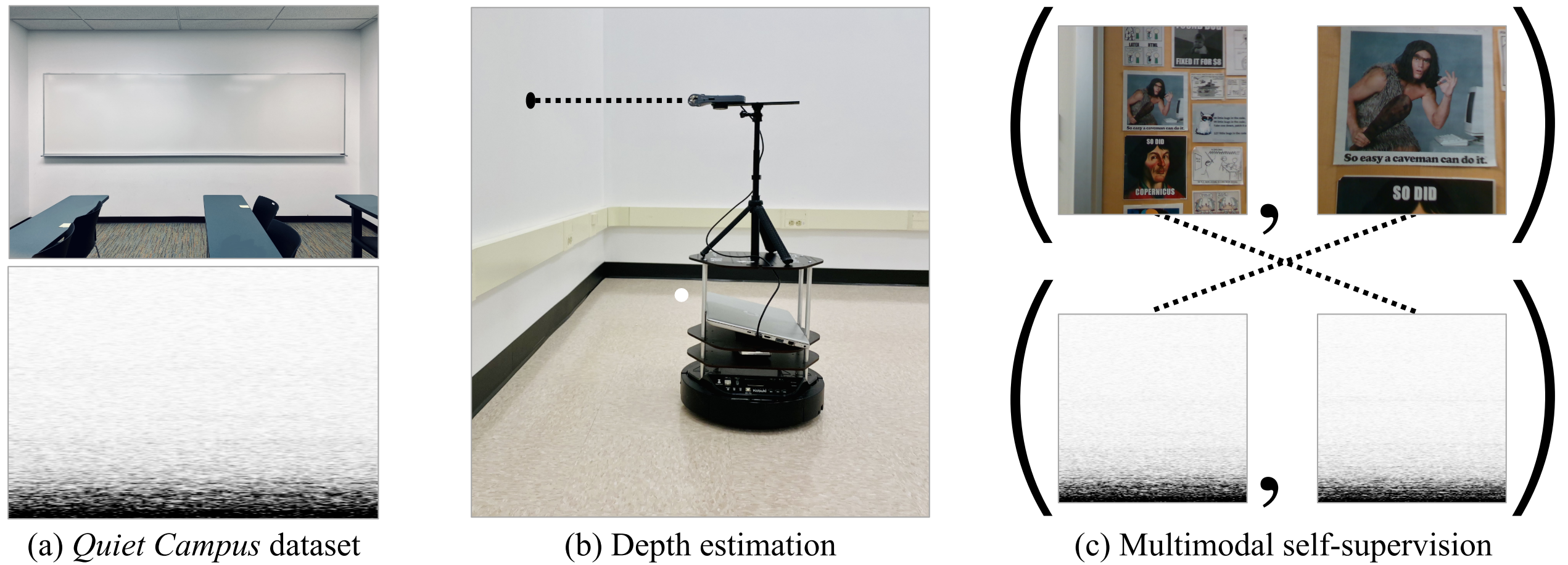

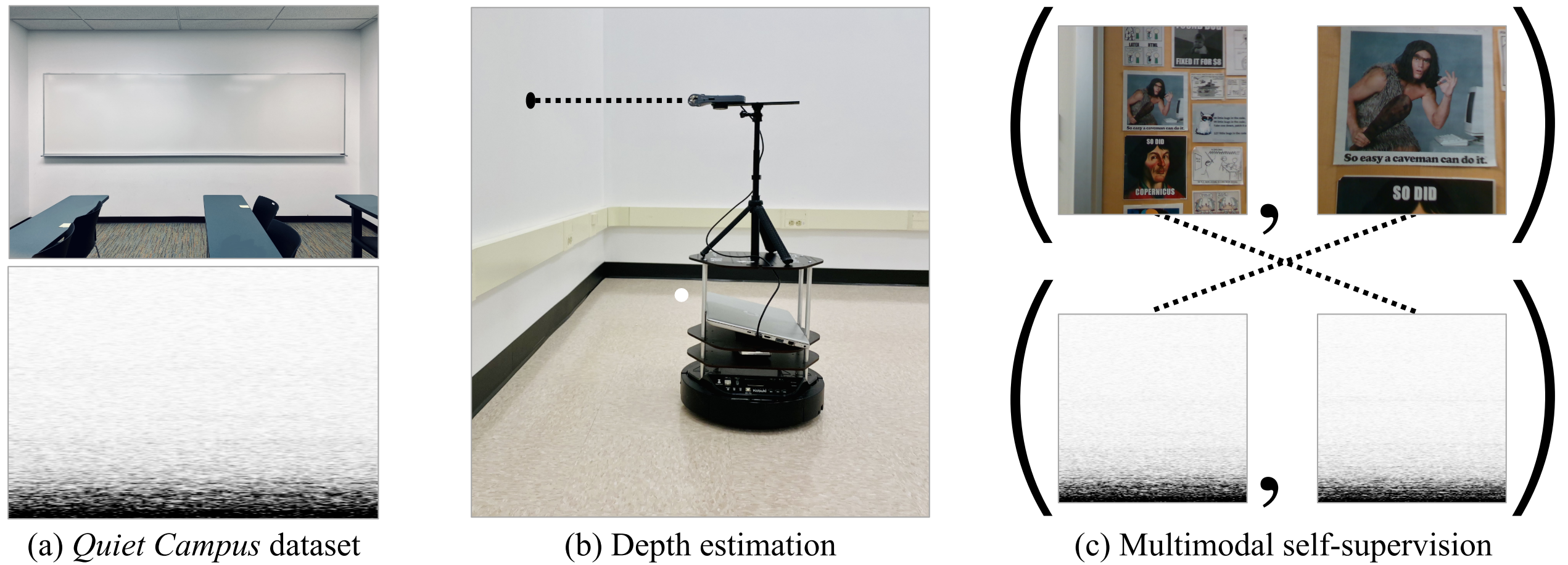

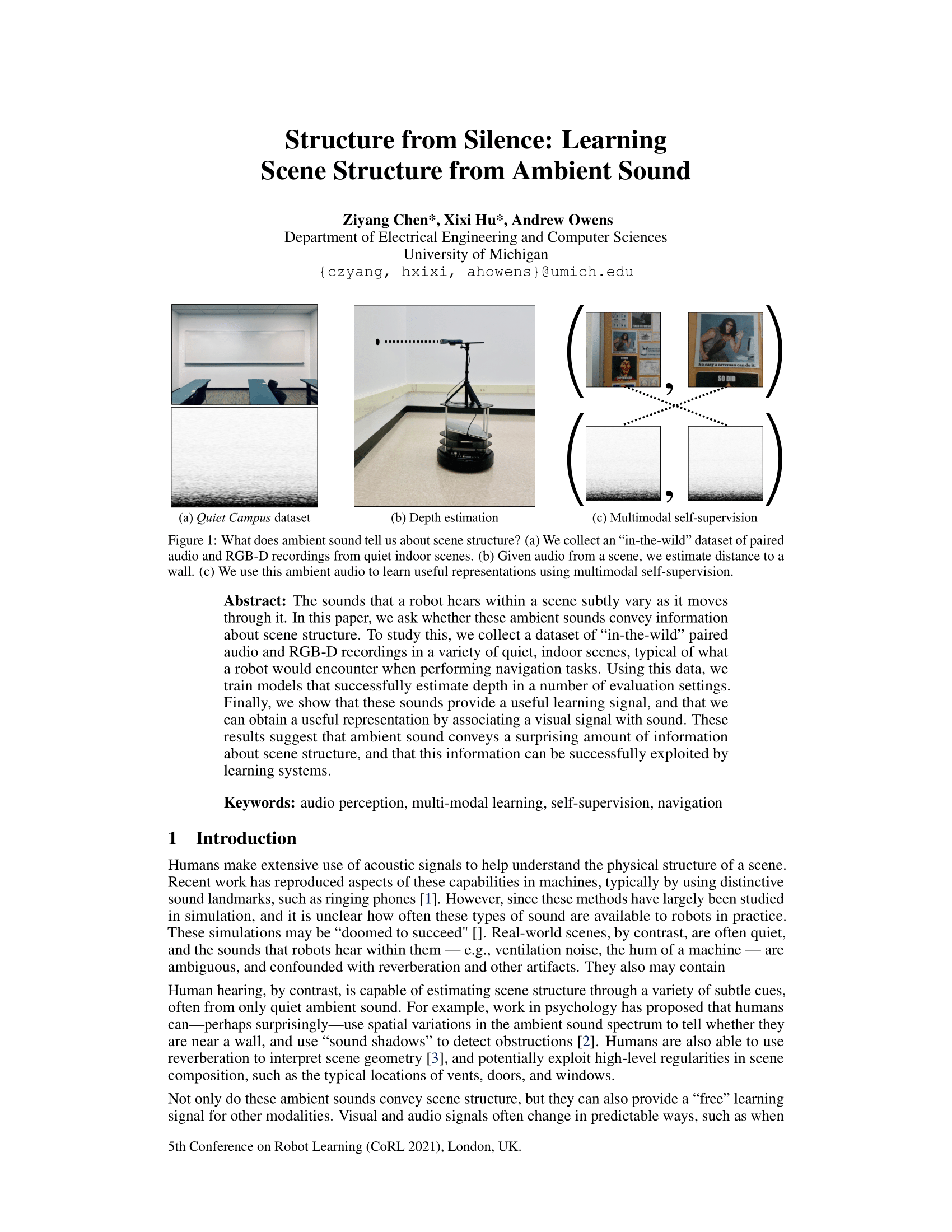

learning signal for multimodal models. To study this, we collect a dataset of paired audio and RGB-D recordings from a

variety of quiet indoor scenes. We then train models that estimate the distance to nearby walls, given only audio as

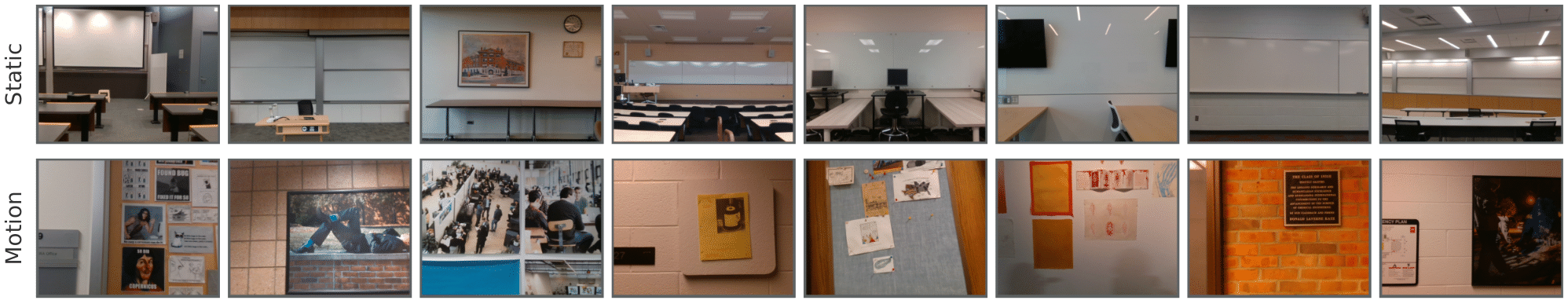

input. We also use these recordings to learn multimodal representations through self-supervision, by training a network

to associate images with their corresponding sounds. These results suggest that ambient sound conveys a surprising

amount of information about scene structure, and that it is a useful signal for learning multimodal features.
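The abstract does not state which objective is used to associate images with their corresponding sounds. A common choice for this kind of audio-visual self-supervision is a symmetric contrastive (InfoNCE-style) loss, and the sketch below assumes that choice. It takes precomputed embeddings from hypothetical image and audio encoders, treats the image and sound from the same recording as a positive pair, and uses the other items in the batch as negatives. The function names and the temperature of 0.07 are illustrative assumptions, not details from the paper.

```python
import numpy as np


def l2_normalize(x, eps=1e-8):
    # Scale each row to unit length so dot products become cosine similarities.
    return x / (np.linalg.norm(x, axis=1, keepdims=True) + eps)


def log_softmax(logits, axis):
    # Numerically stable log-softmax along the given axis.
    shifted = logits - logits.max(axis=axis, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=axis, keepdims=True))


def audio_visual_infonce(img_emb, aud_emb, temperature=0.07):
    """Symmetric InfoNCE loss (assumed objective, hypothetical helper).

    Row i of img_emb and row i of aud_emb come from the same recording
    (the positive pair); every other row in the batch acts as a negative.
    """
    img = l2_normalize(img_emb)
    aud = l2_normalize(aud_emb)
    logits = img @ aud.T / temperature  # (N, N) image-by-sound similarities
    idx = np.arange(logits.shape[0])
    # Image -> sound: each image must pick out its own sound among N candidates.
    loss_i2a = -log_softmax(logits, axis=1)[idx, idx].mean()
    # Sound -> image: each sound must pick out its own image among N candidates.
    loss_a2i = -log_softmax(logits, axis=0)[idx, idx].mean()
    return 0.5 * (loss_i2a + loss_a2i)
```

As a usage example, embeddings whose rows are correctly paired (for instance `aud = img + 0.1 * noise`) should give a much lower loss than the same embeddings with the audio rows shifted by one (`np.roll(aud, 1, axis=0)`), which breaks every pairing. With uninformative (all-zero) embeddings, every candidate is equally likely and the loss equals log N for a batch of N pairs.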
